Query SQL data sources

This page documents an earlier version of InfluxDB OSS. InfluxDB 3 Core is the latest stable version.


The Flux sql package provides functions for working with SQL data sources.

sql.from() lets you query SQL data sources like PostgreSQL, MySQL, Snowflake, SQLite, Microsoft SQL Server, Amazon Athena, and Google BigQuery and use the results with InfluxDB dashboards, tasks, and other operations.

- Query a SQL data source

- Join SQL data with data in InfluxDB

- Use SQL results to populate dashboard variables

- Use secrets to store SQL database credentials

- Sample sensor data

If you’re just getting started with Flux queries, check out the following:

- Get started with Flux for a conceptual overview of Flux and parts of a Flux query.

- Execute queries to discover a variety of ways to run your queries.

Query a SQL data source

To query a SQL data source:

- Import the sql package in your Flux query.

- Use the sql.from() function to specify the driver, data source name (DSN), and query used to query data from your SQL data source:

PostgreSQL

import "sql"

sql.from(
    driverName: "postgres",
    dataSourceName: "postgresql://user:password@localhost",
    query: "SELECT * FROM example_table",
)

MySQL

import "sql"

sql.from(
    driverName: "mysql",
    dataSourceName: "user:password@tcp(localhost:3306)/db",
    query: "SELECT * FROM example_table",
)

Snowflake

import "sql"

sql.from(
    driverName: "snowflake",
    dataSourceName: "user:password@account/db/exampleschema?warehouse=wh",
    query: "SELECT * FROM example_table",
)

SQLite

// NOTE: InfluxDB OSS and InfluxDB Cloud do not have access to
// the local filesystem and cannot query SQLite data sources.
// Use the Flux REPL to query an SQLite data source.
import "sql"

sql.from(
    driverName: "sqlite3",
    dataSourceName: "file:/path/to/test.db?cache=shared&mode=ro",
    query: "SELECT * FROM example_table",
)

SQL Server

import "sql"

sql.from(
    driverName: "sqlserver",
    dataSourceName: "sqlserver://user:password@localhost:1234?database=examplebdb",
    query: "GO SELECT * FROM Example.Table",
)

For information about authenticating with SQL Server using ADO-style parameters, see SQL Server ADO authentication.

Athena

import "sql"

sql.from(
    driverName: "awsathena",
    dataSourceName: "s3://myorgqueryresults/?accessID=12ab34cd56ef&region=region-name&secretAccessKey=y0urSup3rs3crEtT0k3n",
    query: "GO SELECT * FROM Example.Table",
)

For information about parameters to include in the Athena DSN, see Athena connection string.

BigQuery

import "sql"

sql.from(
    driverName: "bigquery",
    dataSourceName: "bigquery://projectid/?apiKey=mySuP3r5ecR3tAP1K3y",
    query: "SELECT * FROM exampleTable",
)

For information about authenticating with BigQuery, see BigQuery authentication parameters.

See the sql.from() documentation for information about required function parameters.
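Because sql.from() returns a stream of tables, its output can be piped into other Flux functions. The following is a minimal sketch rather than a definitive pattern. It assumes the sensors table described in the sample data section of this page, a placeholder connection string, and a hypothetical location value of "Main Lobby".

```flux
import "sql"

// Placeholder DSN: replace with your own connection details.
// The query selects only the columns needed instead of SELECT *.
sql.from(
    driverName: "postgres",
    dataSourceName: "postgresql://user:password@localhost",
    query: "SELECT sensor_id, location FROM sensors",
)
    // "Main Lobby" is a hypothetical value used for illustration.
    |> filter(fn: (r) => r.location == "Main Lobby")
```

Narrowing the column list in the SQL statement keeps the returned table small, while the Flux filter() shows that the rows behave like any other Flux table once they are returned.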

Join SQL data with data in InfluxDB

One of the primary benefits of querying SQL data sources from InfluxDB is the ability to enrich query results with data stored outside of InfluxDB.

Using the air sensor sample data below, the following query joins air sensor metrics stored in InfluxDB with sensor information stored in PostgreSQL. The joined data lets you query and filter results based on sensor information that isn’t stored in InfluxDB.

// Import the "sql" package

import "sql"

// Query data from PostgreSQL

sensorInfo = sql.from(

driverName: "postgres",

dataSourceName: "postgresql://localhost?sslmode=disable",

query: "SELECT * FROM sensors",

)

// Query data from InfluxDB

sensorMetrics = from(bucket: "example-bucket")

|> range(start: -1h)

|> filter(fn: (r) => r._measurement == "airSensors")

// Join InfluxDB query results with PostgreSQL query results

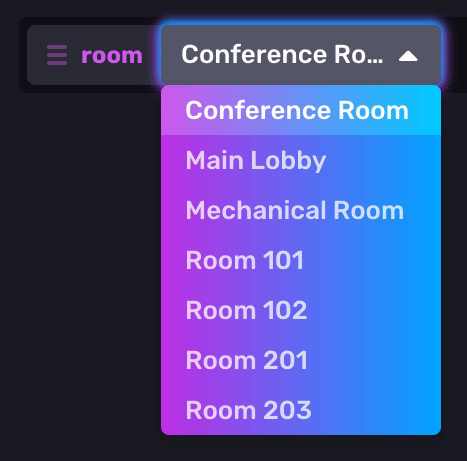

join(tables: {metric: sensorMetrics, info: sensorInfo}, on: ["sensor_id"])Use SQL results to populate dashboard variables

Use sql.from() to create dashboard variables from SQL query results.

The following example uses the air sensor sample data below to create a variable that lets you select the location of a sensor.

import "sql"

sql.from(
    driverName: "postgres",
    dataSourceName: "postgresql://localhost?sslmode=disable",
    query: "SELECT * FROM sensors",
)
    |> rename(columns: {location: "_value"})
    |> keep(columns: ["_value"])

Use the variable to manipulate queries in your dashboards.
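As a sketch of how such a variable might be used in a dashboard cell, the query below joins sensor metrics with sensor information and keeps only rows for the selected location. It assumes the variable was saved under the hypothetical name sensorLocation. Dashboard variables are referenced through the v record, and v.timeRangeStart and v.timeRangeStop are the predefined dashboard time range variables, so this query is meant to run inside a dashboard rather than as a standalone script.

```flux
import "sql"

// Sensor information from PostgreSQL
sensorInfo = sql.from(
    driverName: "postgres",
    dataSourceName: "postgresql://localhost?sslmode=disable",
    query: "SELECT * FROM sensors",
)

// Sensor metrics from InfluxDB, bounded by the dashboard time range
sensorMetrics = from(bucket: "example-bucket")
    |> range(start: v.timeRangeStart, stop: v.timeRangeStop)
    |> filter(fn: (r) => r._measurement == "airSensors")

// v.sensorLocation is a hypothetical variable name; use the name you
// gave the variable when you created it.
join(tables: {metric: sensorMetrics, info: sensorInfo}, on: ["sensor_id"])
    |> filter(fn: (r) => r.location == v.sensorLocation)
```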

Use secrets to store SQL database credentials

If your SQL database requires authentication, use InfluxDB secrets to store and populate connection credentials. By default, InfluxDB base64-encodes and stores secrets in its internal key-value store, BoltDB. For added security, store secrets in Vault.

Store your database credentials as secrets

Use the InfluxDB API or the influx CLI to store your database credentials as secrets.

curl --request PATCH http://localhost:8086/api/v2/orgs/<org-id>/secrets \
    --header 'Authorization: Token YOURAUTHTOKEN' \
    --header 'Content-type: application/json' \
    --data '{
        "POSTGRES_HOST": "example.com",
        "POSTGRES_USER": "example-username",
        "POSTGRES_PASS": "example-password"
    }'

To store secrets, you need:

# Syntax
influx secret update -k <secret-key>

# Example
influx secret update -k POSTGRES_PASS

When prompted, enter your secret value.

You can provide the secret value with the -v, --value flag, but the plain text secret may appear in your shell history.

influx secret update -k <secret-key> -v <secret-value>

Use secrets in your query

Import the influxdata/influxdb/secrets package and use string interpolation to populate connection credentials with stored secrets in your Flux query.

import "sql"
import "influxdata/influxdb/secrets"

POSTGRES_HOST = secrets.get(key: "POSTGRES_HOST")
POSTGRES_USER = secrets.get(key: "POSTGRES_USER")
POSTGRES_PASS = secrets.get(key: "POSTGRES_PASS")

sql.from(
    driverName: "postgres",
    dataSourceName: "postgresql://${POSTGRES_USER}:${POSTGRES_PASS}@${POSTGRES_HOST}",
    query: "SELECT * FROM sensors",
)

Sample sensor data

The air sensor sample data and sample sensor information simulate a group of sensors that measure temperature, humidity, and carbon monoxide in rooms throughout a building. Each collected data point is stored in InfluxDB with a sensor_id tag that identifies the specific sensor it came from. Sample sensor information is stored in PostgreSQL.

Sample data includes:

- Simulated data collected from each sensor and stored in the airSensors measurement in InfluxDB:

  - temperature

  - humidity

  - co

- Information about each sensor stored in the sensors table in PostgreSQL:

  - sensor_id

  - location

  - model_number

  - last_inspected

Download sample air sensor data

- Create a bucket to store the data.

- Create an InfluxDB task and use the sample.data() function to download sample air sensor data every 15 minutes. Write the downloaded sample data to your new bucket:

import "influxdata/influxdb/sample"

option task = {name: "Collect sample air sensor data", every: 15m}

sample.data(set: "airSensor")
    |> to(org: "example-org", bucket: "example-bucket")

- Query your target bucket after the first task run to ensure the sample data is writing successfully.

from(bucket: "example-bucket")
    |> range(start: -1m)
    |> filter(fn: (r) => r._measurement == "airSensors")
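If the raw output is hard to scan, one possible check is to count the points written per sensor. This is only a sketch: the one-hour range is an arbitrary choice, and the bucket name is the same placeholder used above.

```flux
from(bucket: "example-bucket")
    |> range(start: -1h)
    |> filter(fn: (r) => r._measurement == "airSensors")
    // Regroup so that each output table holds all points for one sensor.
    |> group(columns: ["sensor_id"])
    // Count the values in each table, giving one row per sensor.
    |> count()
```

A sensor that is missing from the result has written no points in the selected range.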

Import the sample sensor information

- Download the sample sensor information CSV.

- Use a PostgreSQL client (psql or a GUI) to create the sensors table:

CREATE TABLE sensors (
    sensor_id character varying(50),
    location character varying(50),
    model_number character varying(50),
    last_inspected date
);

- Import the downloaded CSV sample data. Update the FROM file path to the path of the downloaded CSV sample data.

COPY sensors(sensor_id,location,model_number,last_inspected)
FROM '/path/to/sample-sensor-info.csv' DELIMITER ',' CSV HEADER;

- Query the table to ensure the data was imported correctly:

SELECT * FROM sensors;

Import the sample data dashboard

Download and import the Air Sensors dashboard to visualize the generated data:

View Air Sensors dashboard JSON

For information about importing a dashboard, see Create a dashboard.
